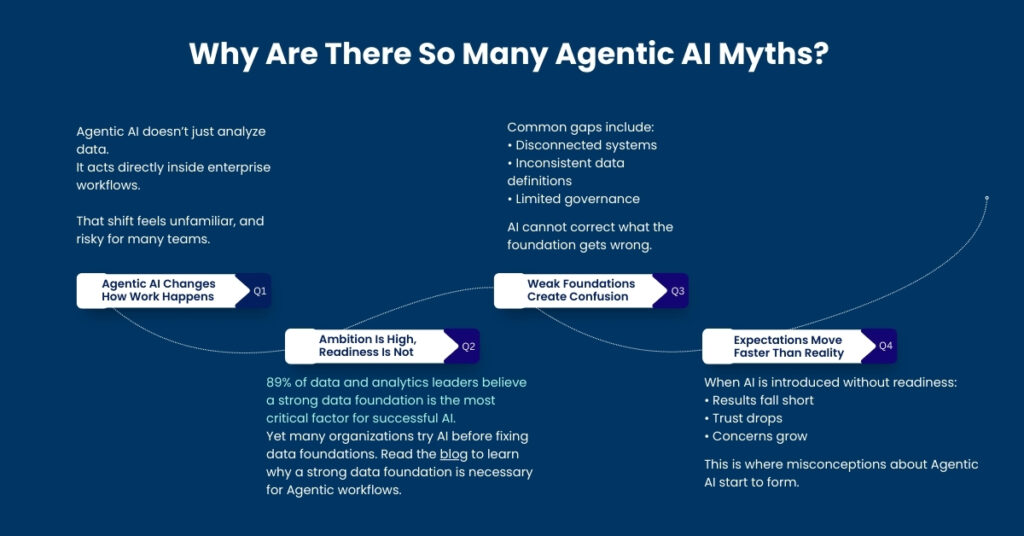

Agentic AI is gaining rapid attention, but it’s also surrounded by questions, expectations, and confusion.

From fears of losing control to assumptions of instant results, Agentic AI myths are shaping decisions more than facts are. In reality, organizational readiness, especially data quality, accessibility, and trust, is repeatedly cited as the biggest barrier to successful AI deployment. Salesforce research shows that just 11% of CIOs have fully implemented AI, as data and security concerns hinder adoption.

This gap between innovation and adoption highlights real Agentic AI risks: deploying advanced systems on shaky data foundations can lead to misleading insights, unreliable actions, and eroded trust.

This blog is designed to educate.

We’ll break down the most common misconceptions, explain the real agentic AI risks, and clarify what enterprises need to get right before expecting value.

Why Are There So Many Agentic AI Myths?

Enterprises are moving fast to adopt Agentic AI, yet many are still catching up on what it truly requires. When foundational gaps exist, assumptions replace understanding, and Agentic AI myths spread faster than value.

Myth #1: Will Agentic AI Replace Human Teams?

This is the most widespread, and most misleading, of all Agentic AI myths.

The fear usually comes from comparing Agentic AI to full automation. But that comparison misses the point. The truth is that Agentic AI is designed to support human teams, not replace them.

Here’s what it does:

- Observes signals across systems

- Coordinates actions at speed

- Escalates decisions when human judgment is required

People remain accountable for strategy, priorities, and relationships. They decide what success looks like, which trade-offs matter, and how customers, partners, and teams should be engaged. Organizations that use AI to augment human teams report higher trust, adoption, and long-term value from AI initiatives.

Myth #2: Can Agentic AI Be Switched On Instantly?

Many people assume Agentic AI delivers results the moment it’s switched on. This is one of the most expensive misconceptions about Agentic AI. In practice, Agentic AI performs only as well as the data, processes, and governance behind it. When those foundations are weak, outcomes suffer.

Agentic AI depends on:

- Clean data

- Standardized definitions

- Aligned workflows

Without these foundations, AI can’t see the full picture. It either pauses because signals don’t align, or it acts on partial information, making decisions that are technically correct but operationally wrong. In both cases, uncertainty grows, and agentic AI risks increase instead of being reduced.

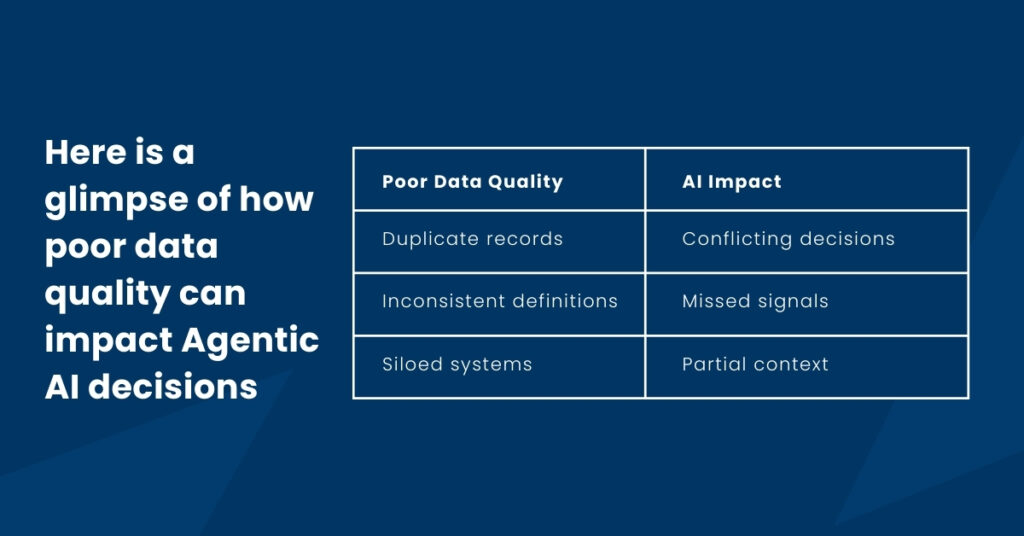

Myth #3: Does More Data Reduce Agentic AI Risks?

More data can feel reassuring, but in practice it often adds confusion.

This is one of the most misunderstood agentic AI risks. When data is duplicated, inconsistent, or disconnected, AI agents spend more time filtering noise than recognizing real signals.

Agentic AI delivers value only when data is relevant, aligned, and connected, so that decisions are based on clarity, not volume.

Strong AI outcomes don’t come from collecting more data. They come from having the right data: clean, connected, and easy to interpret. Clarity gives AI the context it needs to act with confidence, while excess or unstructured data only slows decisions down.

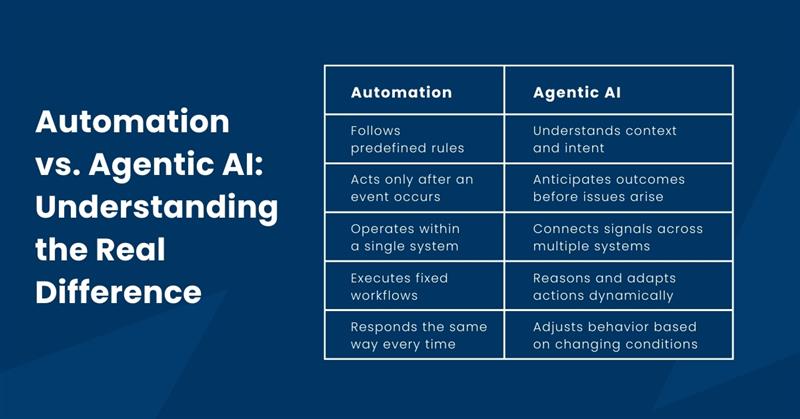

Myth #4: Isn’t Agentic AI Just Smarter Automation?

This is a common misconception, and it oversimplifies what Agentic AI does.

Traditional automation follows predefined rules. Something happens, and the system reacts. It does not understand why the event occurred or what might happen next. Agentic AI works differently. It observes patterns across systems, reasons about cause and effect, and acts before problems escalate.

The key difference is intent and awareness.

Automation might reroute a case after an SLA is breached. Agentic AI notices rising case volume, slowing responses, and sentiment shifts, and acts before the breach happens.
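The contrast can be sketched in a few lines of Python. This is only an illustration under assumed names: `QueueSnapshot`, `reactive_rule`, and `proactive_check` are hypothetical, the numbers are made up, and a real agent would reason over far richer signals than three simple trends.

```python
from dataclasses import dataclass


@dataclass
class QueueSnapshot:
    """Hypothetical periodic reading of a support queue."""
    open_cases: int
    avg_response_minutes: float
    sentiment: float  # -1.0 (negative) to 1.0 (positive)


def reactive_rule(response_minutes: float, sla_minutes: float) -> bool:
    """Traditional automation: fires only once the SLA is already breached."""
    return response_minutes > sla_minutes


def proactive_check(history: list[QueueSnapshot], sla_minutes: float) -> bool:
    """Flags risk while the SLA is still met, if volume is rising,
    responses are slowing, and sentiment is falling."""
    if len(history) < 2:
        return False  # not enough readings to see a trend
    first, last = history[0], history[-1]
    volume_rising = last.open_cases > first.open_cases
    responses_slowing = last.avg_response_minutes > first.avg_response_minutes
    sentiment_falling = last.sentiment < first.sentiment
    still_within_sla = last.avg_response_minutes <= sla_minutes
    return (volume_rising and responses_slowing
            and sentiment_falling and still_within_sla)


# Illustrative data: the SLA (60 minutes) has not been breached yet.
history = [
    QueueSnapshot(open_cases=40, avg_response_minutes=20.0, sentiment=0.4),
    QueueSnapshot(open_cases=55, avg_response_minutes=35.0, sentiment=0.2),
    QueueSnapshot(open_cases=70, avg_response_minutes=50.0, sentiment=-0.1),
]

print(reactive_rule(history[-1].avg_response_minutes, sla_minutes=60.0))  # False
print(proactive_check(history, sla_minutes=60.0))  # True
```

The rule-based check stays silent because nothing has broken yet, while the trend-based check raises a flag early enough for a team, or an agent, to act.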

By connecting Salesforce, Certinia, finance, and operations, Agentic AI understands how events in one area affect another. That shared context allows it to anticipate outcomes, not just respond to them.

Related AblyPro blog:

From Automation to Autonomy: Building the Agentic Enterprise with AI

Myth #5: Are Agentic AI Risks Too High for Enterprises?

This concern is understandable, but the source of the risk is often misunderstood. The biggest Agentic AI risks rarely come from the AI itself. They come from weak foundations like unclear data ownership, poor governance, and inconsistent processes.

Agentic AI acts inside real workflows. Without guardrails, even accurate insights can lead to hesitation or misaligned actions.

That’s why governance is essential.

What Effective Agentic AI Requires

- Strong access controls

- Clear usage policies

- Continuous oversight

When these controls are in place, AI-driven decisions often become more predictable than manual, experience-based ones. Instead of relying on intuition or delayed reports, teams act on consistent, data-backed signals.
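As a rough sketch of how such controls might look in code, the snippet below gates every agent action through a permission check, a usage policy, and an audit log. All names and values here (`AGENT_PERMISSIONS`, `CREDIT_LIMIT`, `authorize`, the action names) are hypothetical and chosen for illustration; they do not describe any specific platform's API.

```python
from datetime import datetime, timezone

# Hypothetical access control: which actions each agent role may take.
AGENT_PERMISSIONS = {"support_agent": {"reassign_case", "issue_credit"}}

# Hypothetical usage policy: credits above this amount need a human decision.
CREDIT_LIMIT = 100.0

# Continuous oversight: every decision is recorded for later review.
audit_log = []


def authorize(agent: str, action: str, amount: float = 0.0) -> str:
    """Return 'allowed', 'escalate_to_human', or 'denied', and log the decision."""
    if action not in AGENT_PERMISSIONS.get(agent, set()):
        decision = "denied"
    elif action == "issue_credit" and amount > CREDIT_LIMIT:
        decision = "escalate_to_human"
    else:
        decision = "allowed"
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "amount": amount,
        "decision": decision,
    })
    return decision


print(authorize("support_agent", "reassign_case"))        # allowed
print(authorize("support_agent", "issue_credit", 250.0))  # escalate_to_human
print(authorize("support_agent", "delete_account"))       # denied
```

Because the same gate handles every request, the outcome for a given action is the same each time, and the log gives reviewers a trail to check.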

What Should Enterprises Learn from This?

Most agentic AI myths pull attention away from what actually determines success.

The technology is rarely the limiting factor. The foundation is.

Agentic AI performs well only when it can rely on data it understands and trusts.

That means data must be:

- Clean: Duplicate records, gaps, or outdated entries confuse AI agents and weaken decisions.

- Standardized: When teams use different definitions for the same metrics, AI cannot reason consistently.

- Connected: Agentic AI needs context across service, projects, finance, and customer success, not fragmented views.

- Governed: Clear access controls and usage rules ensure AI acts predictably and responsibly.
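To make the first three criteria concrete, here is a minimal readiness check in plain Python. The records, field names, and the `ALLOWED_STATUSES` set are hypothetical and do not reflect an actual Salesforce or Certinia schema. The sketch only shows the kind of questions worth asking before an agent relies on the data.

```python
# Hypothetical records; field names are illustrative only.
accounts = [
    {"id": "A1", "name": "Acme", "status": "Active"},
    {"id": "A2", "name": "Globex", "status": "active"},  # non-standard value
    {"id": "A1", "name": "Acme", "status": "Active"},    # duplicate id
    {"id": "A3", "name": "", "status": "Active"},        # missing name
]
projects = [
    {"id": "P1", "account_id": "A1"},
    {"id": "P2", "account_id": "A9"},  # references an account that does not exist
]

# The agreed, standardized set of status values (an assumption for this sketch).
ALLOWED_STATUSES = {"Active", "Inactive"}


def readiness_report(accounts, projects):
    """Summarize clean / standardized / connected issues in the data."""
    seen, duplicates = set(), set()
    for row in accounts:
        if row["id"] in seen:
            duplicates.add(row["id"])
        seen.add(row["id"])

    return {
        # Clean: duplicate ids and empty required fields
        "duplicate_ids": sorted(duplicates),
        "missing_name": [r["id"] for r in accounts if not r["name"]],
        # Standardized: values outside the agreed definitions
        "nonstandard_status": sorted(
            {r["status"] for r in accounts if r["status"] not in ALLOWED_STATUSES}
        ),
        # Connected: projects that point to no known account
        "orphan_projects": [p["id"] for p in projects if p["account_id"] not in seen],
    }


report = readiness_report(accounts, projects)
print(report)
```

Any non-empty list in the report points to a gap that would leave an agent working from a partial or inconsistent picture.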

Without data trust, AI hesitates or produces cautious, limited outcomes.

With clarity and alignment, Agentic AI acts earlier, more confidently, and with better business context.

AblyPro helps enterprises clean, standardize, and connect Salesforce and Certinia data, building the foundation Agentic AI needs to deliver reliable, real-world impact.

The Final Takeaway

Agentic AI isn’t risky by nature; misconceptions about Agentic AI are!

Throughout this blog, we’ve seen that most agentic AI risks don’t come from autonomy or intelligence. They come from unclear data, disconnected systems, and unrealistic expectations. When those gaps exist, myths take over and results fall short.

With the right data foundation, AI supports teams, surfaces risks earlier, and enables smarter, more predictable decisions.

Move past the agentic AI myths!

Start with clarity, not hype, and build the foundation that turns intelligence into trusted outcomes.

Let’s start with your data.

Author

AVP, AblyPro

Murali is the AVP – Certinia at AblyPro, with 12+ years of experience in handling complex Certinia and Salesforce applications, implementations, configurations, and customizations. At AblyPro, he has been the pillar of all Certinia PSA and ERP project deliverables, ranging from design to implementation, project management, and resource management. With years of practical knowledge and expertise in this industry, Murali supports the sales team in strategizing customer solutions that meet clients' actual business needs. Murali is a dynamic and experienced professional with multiple Certinia and Salesforce certifications, helping businesses thrive technically in this ever-changing landscape.